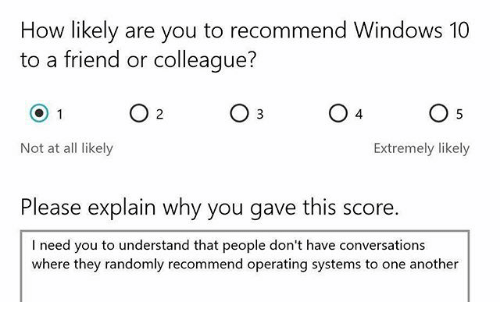

A meme going around pictured a Net Promoter Score survey question: How likely are you to recommend Windows 10 to a friend or colleague? The respondent selected “Not at all likely” and wrote: “I need you to understand that people don’t have conversations where they randomly recommend operating systems to each other.”

It’s funny because it’s true.

The Net Promoter Score (NPS) was designed to solve the problem of survey fatigue. After all, businesses need to get honest customer feedback. But customers feel beleaguered because they can’t go to the doctor, call a federal office, or ask a question about their phone bill without being asked how they think the interaction went. NPS was a compromise. It replaces a long survey with two questions: On a scale of zero to 10, how likely are you to recommend this product or service? Why?

Created by Rob Markey and Fred Reichheld of Bain & Co., the system was based on the idea that, if there’s one thing you really need to know, it’s whether folks are happy enough with how your company performed to recommend you, and if not, why not. It classifies respondents who give the company a nine or 10 as promoters, seven or eight as passives, and anything below that as detractors. Your Net Promoter Score is the percentage of promoters minus the percentage of detractors.
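To make the arithmetic concrete, here is a minimal sketch of the calculation described above. The function names and the sample responses are made up for illustration, and the code assumes integer scores no higher than 10.

```python
from collections import Counter


def classify(score: int) -> str:
    """Bucket one survey answer using the cutoffs described in the article."""
    if not 0 <= score <= 10:
        raise ValueError(f"score out of range: {score}")
    if score >= 9:
        return "promoter"
    if score >= 7:
        return "passive"
    return "detractor"


def net_promoter_score(scores: list[int]) -> float:
    """Percentage of promoters minus percentage of detractors."""
    if not scores:
        raise ValueError("need at least one response")
    counts = Counter(classify(s) for s in scores)
    return 100 * (counts["promoter"] - counts["detractor"]) / len(scores)


# Hypothetical responses: 5 promoters, 2 passives, 3 detractors.
responses = [10, 9, 9, 8, 7, 6, 3, 10, 5, 9]
print(net_promoter_score(responses))  # 50% promoters - 30% detractors = 20.0
```

Note that passives count toward the total number of responses but add nothing to either side, so the score can range from -100 to 100.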


The NPS has become the predominant metric that companies use to gauge success as a brand. But, as the meme points out, the NPS question doesn’t necessarily provide the best metric for every business or every situation. There are some things that lend themselves to brand promotion—hair products and vacation spots come to mind—and other things that really don’t. Companies need to think about their business model and how customers interact with them rather than picking a standard customer experience (CX) metric and hoping it will provide an accurate picture of customer love or loyalty.


Bruce Temkin is the founder of Temkin Group, which was acquired by Qualtrics. He’s also co-founder and Chairman Emeritus of the Customer Experience Professionals Association and former Vice President & Principal Analyst at Forrester Research, focusing on customer experience. According to him, what matters is how you are using the metric.

“It’s valuable to have a single core CX metric that acts as the scorecard that aligns the entire organization,” he said. “Net Promoter Score is the most popular core metric, but not the best one for everyone. It’s just important that it is connected to the desired business results and is easy for the organization to understand. Below that core metric needs to be a lot of other measurements and data collection to help identify what it takes to move the needle on the core CX metric.”


A metrics smorgasbord

Some of the many other metrics companies can track include:

- Customer Satisfaction (CSAT)

This is similar to NPS in that it asks one question: How would you rate your overall satisfaction with the [goods/service] you received? The rating scale runs from one (very unsatisfied) to five (very satisfied). Again, the top two ratings are used to calculate the satisfaction score. This tells you how a customer feels about their most recent transaction but, unlike NPS, doesn’t tell you whether the customer is so pumped they’re going to tell other people about it. In some cases, that’s okay.

- Customer Effort Score (CES)

This single question asks customers how easy or difficult their transaction was. While NPS and CSAT rate general satisfaction with overall brand experiences, CES narrows in on the ease of the customer experience, which can prompt companies to take specific actions to make the experience more effortless.
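For comparison with NPS, the CSAT score described above can be sketched the same way. This is one common "top two" reading of the calculation, assuming the share of fours and fives is reported as a percentage; the function name and sample ratings are hypothetical.

```python
def csat_score(ratings: list[int]) -> float:
    """Percentage of responses that fall in the top two ratings (4 or 5)."""
    if not ratings:
        raise ValueError("need at least one rating")
    for r in ratings:
        if not 1 <= r <= 5:
            raise ValueError(f"rating out of range: {r}")
    satisfied = sum(1 for r in ratings if r >= 4)
    return 100 * satisfied / len(ratings)


# Hypothetical ratings: five of the eight are a 4 or a 5.
ratings = [5, 4, 4, 3, 2, 5, 1, 4]
print(csat_score(ratings))  # 62.5
```

Unlike NPS, nothing is subtracted here, so the result stays between 0 and 100.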

Qualtrics lists some other options such as:

- Retention loyalty – how likely are you to remain with us?

- Purchase loyalty – how likely are you to continue buying from us?

- Meeting expectations – how much better (or worse) was your experience compared to your expectations?

- Waiting time – how long did you have to wait in a call queue?

- Online – how easy was it to find what you were looking for?

There’s also customer acquisition rate, conversion rate, abandoned shopping carts, resolution rate, ticket backlog rate, first response times, customer reviews…

Of all the feedback systems, one of the most specific is still the one customers take upon themselves: making the effort to leave feedback. Temkin did a study showing that customers who have very bad or very good experiences are more likely to give feedback directly to the company than to post about it on social media or third-party rating sites. And they’re more likely to share positive feedback through online surveys and negative feedback through emails.


Of those who do post about the experience, Generation Z and Millennials are most likely to use social media; older consumers tend to use third-party ratings sites. However, a review’s value has become increasingly dubious. While Gen Z and Millennials rely heavily on recent reviews in making their decisions about what companies to do business with, there is an increased number of fake reviews—dirty data that must be cleaned before it’s of any use in improving the customer experience.

What to do with CX data

Useful customer service metrics have to provide clean data that supports a particular KPI or initiative. They must incorporate how your customers interact with you; what you’re trying to improve or solve; and what is the most effective way to understand the truth of the customer experience in that arena.

But the metrics also need to inspire action from a team whose job it is to implement changes in response to that feedback—whether it’s making adjustments to the website interface, customer support protocols, or to the product itself.

“The goal is to have a metric that lines up with your business objectives,” Temkin said. “If you are looking to reduce churn, for instance, then a churn measurement could be a good overall metric. But the key thing is to make sure to have an overall program where you listen to customers, analyze their feedback, and disseminate the insights to people so that they can take action to improve the metric.”


To make a difference, he has written, customer experience needs to be a systemic, sustained effort for businesses. It’s a journey, not an event, that must be “embedded in their operating fabric.” So the motivation has to come from the top and there must be specific people whose job it is to monitor and grapple with customer experience data on a regular schedule.

Temkin’s research predicts that a modest improvement in CX would gain a $1 billion company an average revenue increase of $775 million over three years. And yet, a Harvard Business Review study showed that only 20 percent of companies said they respond to most customer feedback, and 50 percent said they respond to “some.” Twenty-one percent said they respond to very little. The study cited several reasons for that: “organizational silos, lack of systems or data standardization and integration, data quality issues, and inconsistent collection of data.”

“The most common mistake is overly focusing on the metric, instead of the overall improvement process,” Temkin said. “The goal is not about a number, it’s about putting in place a system for continuously learning, propagating insights, and rapidly adapting. When companies focus too much on the metric, they can incent poor behaviors such as gaming the system, debating the veracity of the measurement, and complaining about the data.”

Temkin has his own CX model that seems to cover most industries:

- Success: Degree to which customers can accomplish their goals

- Effort: The difficulty or ease in accomplishing their goals

- Emotion: How the interaction makes customers feel

It’s the last one, he said, that most powerfully drives customer loyalty. But it’s the combination of the X-data with the O-data that yields the most actionable insights. X-data includes customer feedback from scoring data and reviews to recorded contact center interactions. O-data is operational information like how long the person has been a customer and their purchase history. Following the customer journey can help tie both in at every step.

“That will be a goldmine for predictive analytics…we’ll be able to better understand the connection between our actions with customers and the resulting business impact,” he said.
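As a rough illustration of tying X-data to O-data, the sketch below joins survey scores to operational records by customer and compares average scores for newer and longer-tenured customers. Every field name, the 12-month cutoff, and the sample records are assumptions made for the example, not part of Temkin’s model.

```python
from statistics import mean

# X-data: hypothetical experience feedback, one survey score per customer.
x_data = [
    {"customer_id": "c1", "score": 9},
    {"customer_id": "c2", "score": 4},
    {"customer_id": "c3", "score": 10},
    {"customer_id": "c4", "score": 6},
    {"customer_id": "c5", "score": 8},  # no operational record on file
]

# O-data: hypothetical operational records keyed by customer.
o_data = {
    "c1": {"tenure_months": 36, "orders": 12},
    "c2": {"tenure_months": 2, "orders": 1},
    "c3": {"tenure_months": 48, "orders": 30},
    "c4": {"tenure_months": 5, "orders": 2},
}


def join_x_and_o(x_rows, o_by_customer):
    """Attach operational fields to each feedback row; skip unmatched rows."""
    joined = []
    for row in x_rows:
        ops = o_by_customer.get(row["customer_id"])
        if ops is not None:
            joined.append({**row, **ops})
    return joined


def average_score_by_tenure(rows, cutoff_months=12):
    """Average survey score for customers below and at or above the cutoff."""
    new = [r["score"] for r in rows if r["tenure_months"] < cutoff_months]
    long_time = [r["score"] for r in rows if r["tenure_months"] >= cutoff_months]
    return {
        "new": mean(new) if new else None,
        "long_time": mean(long_time) if long_time else None,
    }


joined = join_x_and_o(x_data, o_data)
print(average_score_by_tenure(joined))
```

In this made-up sample the newer customers score lower, which is the kind of pattern that a combined view can surface and a survey score alone cannot.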

Every interaction between humans can trigger thousands of neurons—everything from “tone” to speed to keywords. If we’re going to get really good at customer experience, we have to hit all the buttons at once, for every customer. Let the journey begin.